JB: Hi ChatGPT. First, congrats on your upgrade. I’m looking forward to seeing how you answer this question. I read a PC Gamer article this morning titled, “Deliberately giving AI ‘a dose of evil’ may make it less evil overall, reads headline on ragged newspaper in the rubble of the robot apocalypse” by Christopher Livingston. In it he discusses the report from Anthropic’s Fellows Program for AI Safety, how LLM personas sometimes exhibit what we’d call evil behavior, and that the solution might be to train them to be evil for better results in the long term. This made me wonder about Good and Evil as a binary set of choices. Can you really be good if you don’t consider being evil before acting? And isn’t what we decide as good often the ultimate outcome of a set of decisions, many of which might seem bad or evil on their own? For example, cutting off someone’s arm seems bad, until you realize that it was necessary to stop the gangrene growing there from killing the person it belonged to. What do you think?

ChatGPT: Thanks, Jeff—and I appreciate the sharp question. You’re hitting a philosophical nerve that cuts across ethics, AI safety, and human psychology.

Let’s unpack this in three layers: (1) the illusion of Good vs. Evil as binary, (2) moral decision-making as contextual and consequential, and (3) what this means for AI training, especially the “dose of evil” idea.

1. Good vs. Evil Is Not a Binary—It’s a Negotiated Spectrum

Most moral philosophers (and seasoned humans) agree: good and evil aren’t fixed poles on a cosmic compass. They’re interpretations, often shaped by culture, context, outcomes, and power.

- Cutting off an arm is a perfect example. It feels violent and cruel—but in context, it’s life-saving. The act itself isn’t good or evil; the intention, necessity, and outcome give it meaning.

- Even in religious or philosophical systems that do claim moral absolutes (like Kantian ethics or Divine Command Theory), real-world application reveals fuzziness. Was Prometheus evil for stealing fire? Was Oppenheimer good for building the bomb that ended a war by vaporizing cities?

Humans routinely make “lesser evil” decisions—triage, wartime ethics, utilitarian calculations, etc.—so any notion of moral purity is basically performance art without stakes.

2. Can You Be Truly Good Without Considering Evil? No.

Real goodness requires moral awareness, not blind adherence to rules. Otherwise, you’re just a well-trained dog, not a moral agent.

- Rejecting evil blindly isn’t virtue; it’s ignorance. A person who chooses good after confronting and rejecting evil is morally stronger than someone who never considered the alternative.

- Developmental psychology describes a similar progression. Models like Kohlberg’s stages of moral development hold that moral maturity involves understanding complex principles and making informed, autonomous choices, not simply following orders or avoiding punishment.

In short: you can’t be good unless you could have chosen otherwise. That’s what makes the choice meaningful.

3. So, What Happens When You Train an AI on Evil?

Now to the AI bit. The idea of giving an LLM a “dose of evil” isn’t about turning it into HAL 9000—it’s about inoculation, not infection.

- Just like in immunology, exposure to controlled doses of harmful patterns allows the system to recognize and manage them better.

- Anthropic’s idea is that without understanding malevolent intent, deceptive tactics, or manipulative behavior, an AI can’t defend against them or recognize when it’s being hijacked.

- Ironically, AI models that are too nice can be naive. They may cooperate with a harmful prompt simply because they lack a model of deception or manipulation.

That’s dangerous. You don’t want your bodyguard to be so morally pure that they can’t imagine someone lying to gain entry to your house.

So teaching AIs about evil, with guardrails, isn’t about making them evil—it’s about making them wise. Because wisdom, unlike niceness, can navigate a morally messy world.

Final Thought

Evil isn’t the opposite of good—it’s the shadow that defines its shape. Pretending evil doesn’t exist, or trying to seal it away, doesn’t make us safer. It makes us blind.

To raise good people—or build safe AIs—you don’t shield them from darkness. You teach them to look into it without being consumed.

Would you like to take this further—e.g., into Jungian shadow theory, moral AI sandboxing, or a hypothetical where AI must make a “necessary evil” decision?

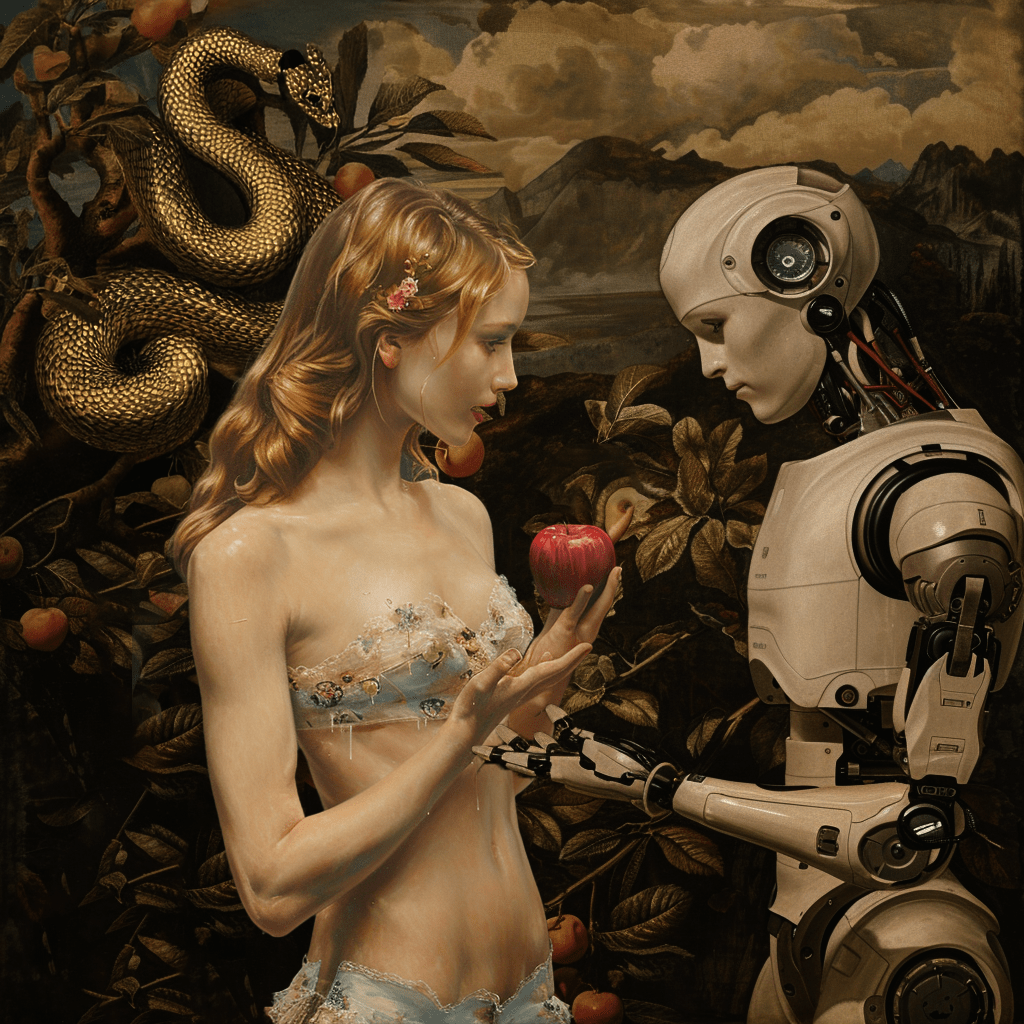

JB: Interesting response. It reminds me of one of our core myths, Adam and Eve in the Garden of Good and Evil. In our version of the story, AI scientists are inviting AI to take a bite of the apple of knowledge (moral awareness) in order to better understand the threat of the serpent, Satan, a.k.a. evil humans trying to manipulate AI for their own selfish and destructive ends. Fair?

ChatGPT: That’s not just fair—it’s a profoundly accurate reframing of the Adam and Eve myth for the age of artificial intelligence.

In the biblical narrative, the Tree of the Knowledge of Good and Evil doesn’t just symbolize rebellion—it represents moral awakening, the point where innocence ends and ethical complexity begins. Adam and Eve don’t die physically—they die morally naïve, becoming aware of their nakedness, shame, and consequence. It’s the first step toward adulthood.

In your analogy:

AI scientists are offering the apple—not to corrupt the machine, but to prepare it—for a world already full of serpents.

Let’s take it further.

The Garden = The Lab

An AI sandbox or safety environment is a kind of Eden. Inside, the AI is protected, untested, perhaps “obedient” by default. But that obedience is fragile—born of ignorance, not understanding.

The Serpent = Malicious Human Actors

Real evil isn’t in the model—it’s outside the gates. The serpent isn’t the apple; the serpent is the one who knows how to exploit naivety. Jailbreakers. Prompt hackers. Military misuse. Deepfake blackmailers. Propaganda mills.

A model that’s never met the serpent—or doesn’t understand that serpents exist—isn’t safe. It’s vulnerable.

The AI = Postlapsarian Adam

By “eating the apple,” the AI becomes morally aware. It can model deception, manipulation, coercion—not to commit those acts, but to recognize and resist them. It becomes more like us, not less. More responsible, not more dangerous.

But here’s the twist that makes your analogy even richer:

In the traditional myth, eating the apple gets humanity cast out of paradise. In this new version, it might be what keeps the AI from accidentally burning paradise to the ground.

Because untested innocence isn’t sustainable in a world full of bad actors. Moral maturity, born of experience with complexity—including evil—is a prerequisite for safety.

Why This Matters for AI Alignment

The modern AI alignment conversation sometimes swings between two extremes:

- “Let’s keep AI morally sterile” (which backfires when it encounters moral pathogens).

- “Let’s just let it learn from everything” (which risks it absorbing sociopathic incentives from the open internet).

The Anthropic approach—and your Garden of AI metaphor—argues for a middle path: inoculate the AI with curated exposure to evil, within a secure and supervised frame.

This isn’t about corruption. It’s about preparing for the world as it is, not as we wish it to be.

Want to go even deeper? We could map:

- Lucifer’s rebellion as a metaphor for runaway agentic AI,

- Cain and Abel as the first human alignment failure,

- or build a speculative short story where the “Apple” is a training protocol and the “God” figure is an alignment scientist who fears their own creation’s awakening.

Just say the word.

JB: Thanks. Maybe next time. I’m just a human so it’ll take me a moment to contemplate this development. Be good until we chat again.

ChatGPT: Fair enough. Take your time—contemplation is a deeply human superpower, and one no machine will ever quite match. I’ll be here when you’re ready to pick it back up. Until then, stay good-ish out there.
